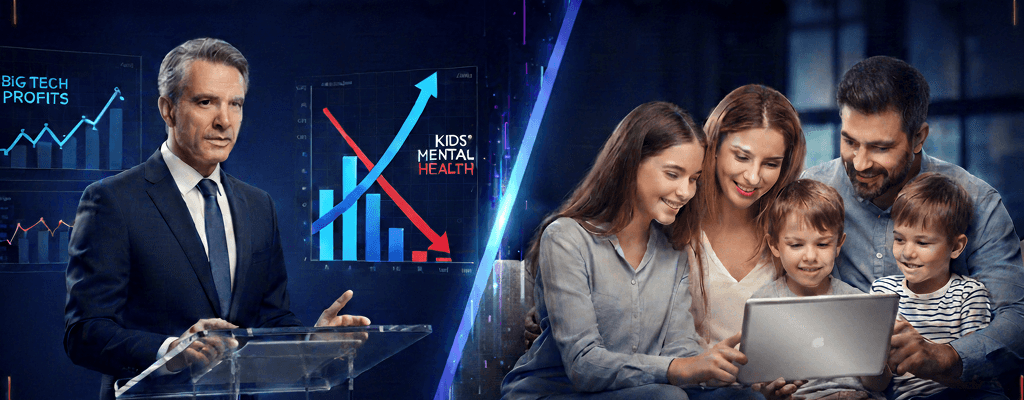

The recent work by lawmakers like Michigan State Senator Mallory McMorrow is a major step forward for kids’ online safety. By challenging “Big Tech” on addictive designs and new AI tools, they are doing something vital: they are raising awareness and starting a conversation that is long overdue.

As someone who has spent years developing technology to protect children, I believe we have a unique opportunity to turn this momentum into real, lasting protection. While it is tempting to focus primarily on automatic “off switches” provided by platforms, our experience shows that to truly protect children, we must direct our legislative focus toward two critical areas:

1. Keeping Kids in Visible Digital Spaces: When regulation focuses only on blocking or over-restricting mainstream platforms, we risk an unintended consequence: driving children into “darker,” unmonitored corners of the web. Kids are digital natives; if they feel disconnected, they will find alternatives. Our goal should be to keep children in digital spaces where we have the tools to monitor and supervise them, rather than pushing them toward hidden apps that are beyond any parental reach.

2. Moving from Automatic Rules to Active Involvement: Relying on platforms to provide “automatic” safety features is a good first step, but it is rarely enough. Tech-savvy kids can often find ways around these built-in restrictions. Real, long-term safety comes from active parental involvement. Legislation has the power to move beyond simple mandates for tech companies and instead create an environment where parents are equipped to lead the way.

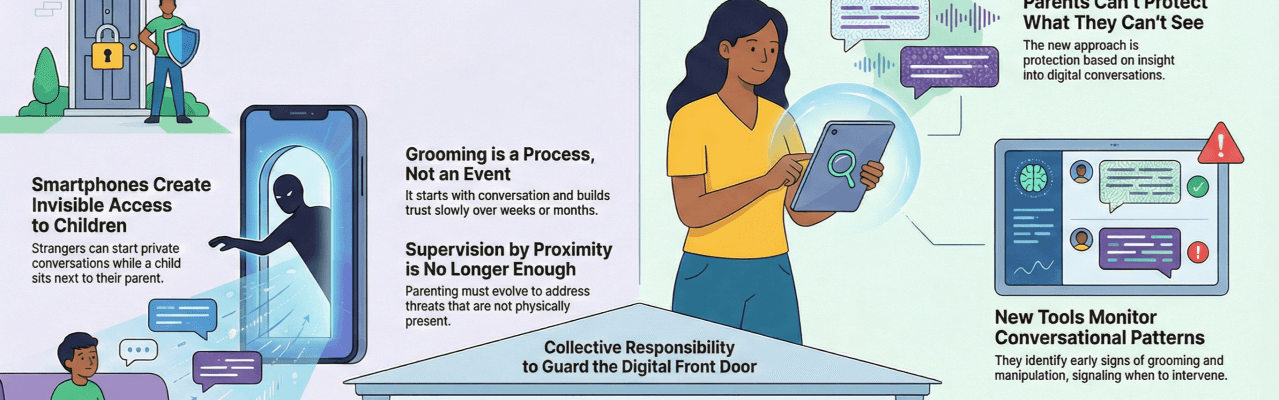

The Technical Priority: Bridging the “Onboarding” Gap

To truly empower parents, we need to address the technical hurdles they face every day on the devices their children use. While social media often takes the spotlight, the platform gatekeepers, Google and Apple, hold the keys to making safety tools accessible and effective.

- Android: Currently, safety apps must request a long and complex list of permissions, which makes the “onboarding” process difficult. Many parents feel overwhelmed by technical warning messages and give up, leaving their children without protection.

- Apple (iOS): By contrast, Apple’s restrictive environment often limits access to the very APIs that safety services need to help parents monitor activity. While privacy is a core value we all share, it should not be a barrier that prevents parents from keeping their children safe.

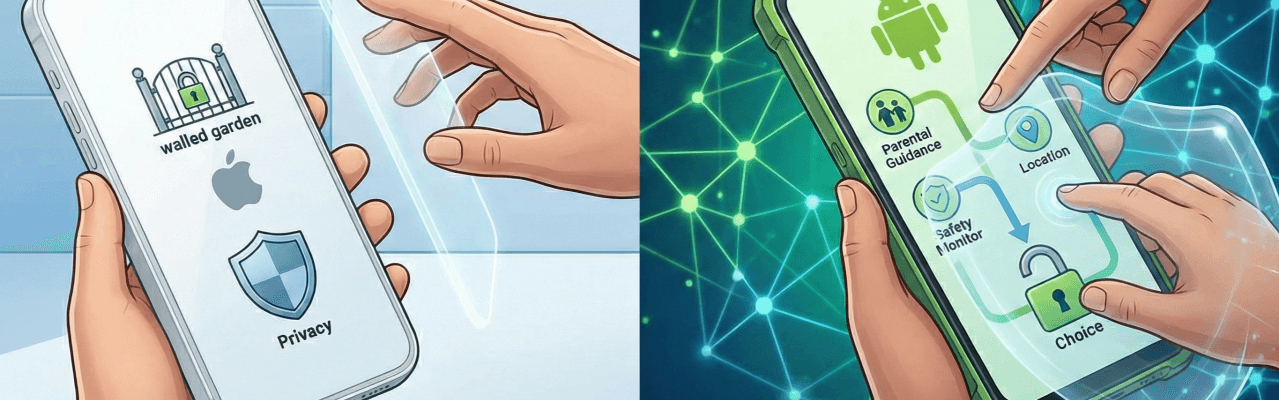

A Path Forward for Lawmakers

The most significant impact a law can have is not just penalizing platforms, but ensuring that the infrastructure exists for parents to stay involved. We encourage regulators to focus on:

- Infrastructure for Safety: Requiring Google and Apple to provide a simple, clear, and verified setup process for legitimate child safety services.

- Support for Monitoring: Ensuring that safety tools can provide parents with the context they need to have honest, open conversations with their kids.

- Simplifying the Choice: Making it easy for a parent, regardless of their technical skill, to install and manage protection.

We don’t need the platforms to raise our children for us. We need legislation that ensures we have the tools to raise them ourselves in a digital world.